Geography of Hidden Faces

Concept & Development

Joey Lee

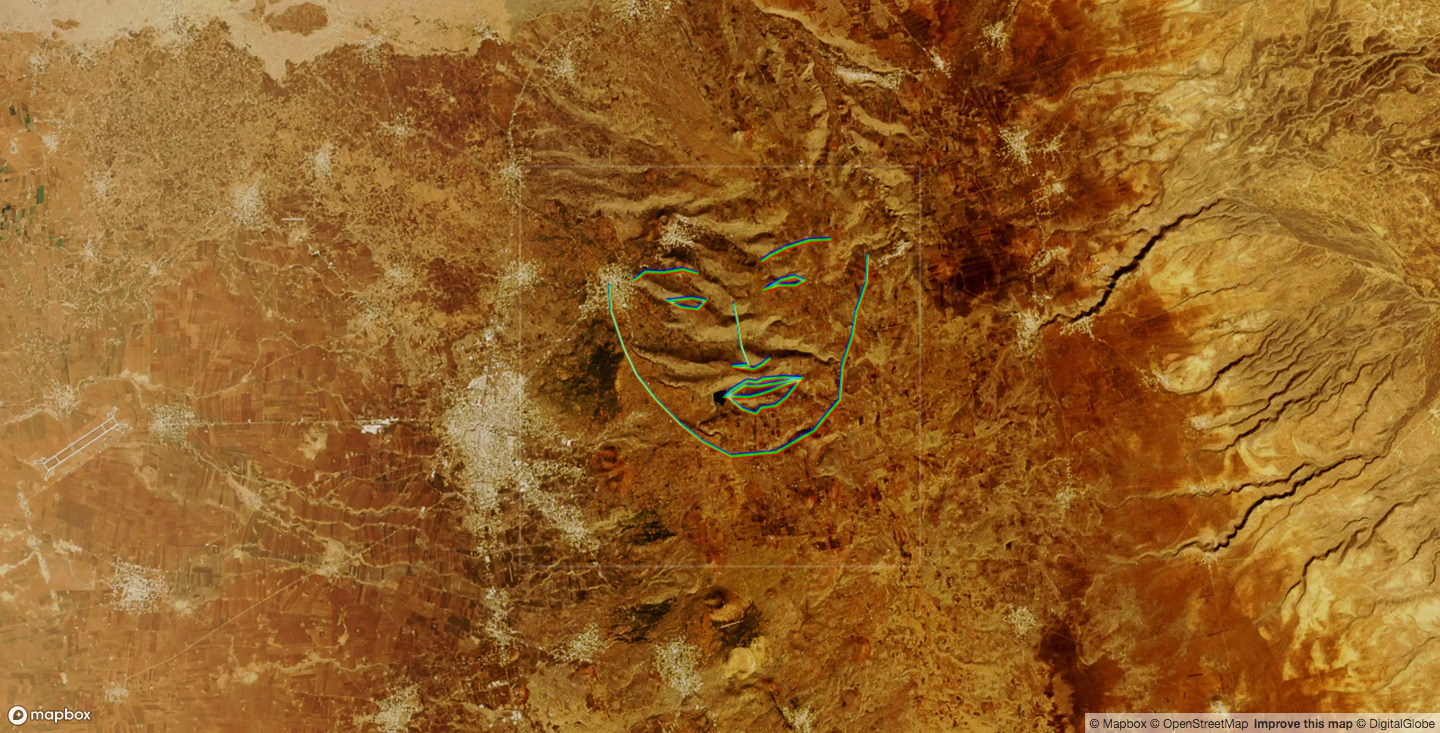

Geography of Hidden Faces applies AI to aerial imagery to “see” faces in the landscape.

This project applies machine-learning-based facial recognition algorithms to aerial imagery to find “faces” on Earth’s surface. This exercise produces surprising results: faces are detected in landscapes in often unintuitive and sometimes unexplainable ways. What do the algorithms see in those pixels that we don’t?

The facial recognition algorithms used in this project are accessed through the face-api.js application programming interface (API) as implemented in ml5.js. At its core, the facial recognition and detection model is built on the WIDER FACE dataset, which contains ~30,000 images and ~390,000 faces in total. These were used to create a model capable of demarcating 68 key face points, also known as “face landmarks”. When pointed at images of people, these models generally perform well, meaning that they can quickly detect faces and their landmarks. However, it must be acknowledged that facial recognition algorithms tend to perform worse for women and people of color.
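To make the “68 key face points” a little more concrete, here is a minimal sketch of how such a list of points is commonly divided into facial features. The index ranges follow the widely used 68-point landmark layout; the names `LANDMARK_GROUPS` and `groupLandmarks` and the placeholder points are hypothetical, invented for illustration, and are not taken from the project's code.

```javascript
// Index ranges [start, end) for the common 68-point face landmark layout.
// Assumption: the model returns its points in this conventional order.
const LANDMARK_GROUPS = {
  jawOutline: [0, 17],
  leftEyebrow: [17, 22],
  rightEyebrow: [22, 27],
  nose: [27, 36],
  leftEye: [36, 42],
  rightEye: [42, 48],
  mouth: [48, 68],
};

// Split a flat array of 68 {x, y} points into named facial features.
function groupLandmarks(points) {
  if (points.length !== 68) {
    throw new Error(`Expected 68 landmarks, got ${points.length}`);
  }
  const grouped = {};
  for (const [name, [start, end]] of Object.entries(LANDMARK_GROUPS)) {
    grouped[name] = points.slice(start, end);
  }
  return grouped;
}

// Placeholder points standing in for real model output (not project data).
const placeholderPoints = Array.from({ length: 68 }, (_, i) => ({ x: i, y: i }));
const parts = groupLandmarks(placeholderPoints);

console.log(Object.keys(parts)); // the seven feature names
console.log(parts.mouth.length); // number of points outlining the mouth
```

When the model “finds” a face in a landscape, it is these same groups (a jaw, two eyes, a mouth) that get projected onto rivers, fields, and rooftops.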

For this project, the minimum confidence threshold for detections was lowered to 0.0001, meaning that nearly anything the facial recognition model might believe to be a face is returned to the viewer.
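The effect of lowering that threshold can be sketched in a few lines. This is an illustration under stated assumptions, not the project's code: the candidate labels and scores are invented, `filterByConfidence` is a hypothetical helper, and the comparison value of 0.5 is assumed here as a typical default rather than taken from the project.

```javascript
// Hypothetical candidate detections, each with a confidence score in [0, 1].
// These values are invented for illustration, not output from the project.
const candidates = [
  { label: "river bend", score: 0.92 },
  { label: "crop circle", score: 0.41 },
  { label: "parking lot", score: 0.03 },
  { label: "cloud shadow", score: 0.0004 },
  { label: "open water", score: 0.00002 },
];

// Keep only candidates whose score meets the minimum confidence.
function filterByConfidence(detections, minConfidence) {
  return detections.filter((d) => d.score >= minConfidence);
}

// An assumed typical threshold versus the one used in this project.
const typical = filterByConfidence(candidates, 0.5);
const lowered = filterByConfidence(candidates, 0.0001);

console.log(typical.length); // 1
console.log(lowered.length); // 4
```

At the assumed typical threshold only the most confident candidate survives; at 0.0001 almost everything does, which is what lets the model's faintest hunches surface in the landscape.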

Works such as Philipp Schmitt’s “A Computer Walks into a Gallery” or “Introspection” play with this idea of purposefully feeding abstract data to machine learning models. The exercise offers a means of interrogating otherwise black-box systems and architectures (the layers of the neural networks). It creates opportunities to question the impressive and opaque mechanics of what Kyle McDonald has aptly described as “programming with examples versus with instructions.” What happens to those pixels when they are mashed up, sorted, and arithmetically pummeled with calculus? I would argue that these interventions offer signals of those layers of computational abstraction in a way that can and must be seen and felt.

→ See the project and read more at geo.hidden-faces.net